
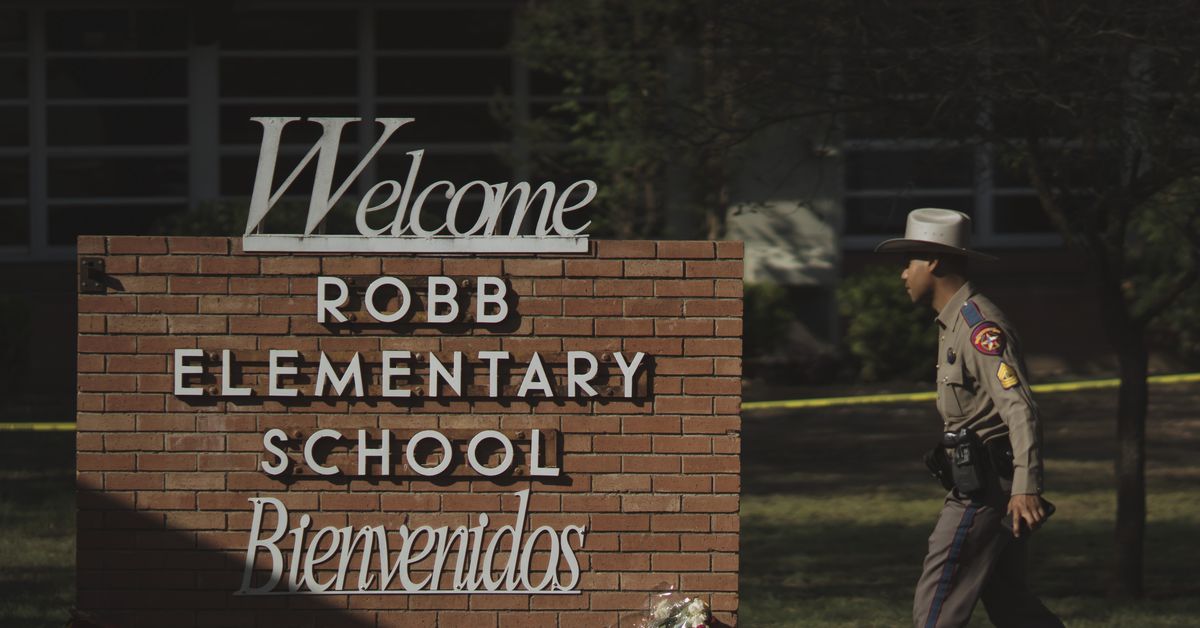

After a shooter killed 21 people, including 19 children, in the massacre at Robb Elementary School in Uvalde, Texas, last week, the United States is yet again confronting the devastating impact of gun violence. While lawmakers have so far failed to pass meaningful reform, schools are searching for ways to prevent a similar tragedy on their own campuses. Recent history, as well as government spending records, indicates that one of the most common responses from education officials is to invest in more surveillance technology.

In recent years, schools have installed everything from facial recognition software to AI-based tech, including programs that purportedly detect signs of brandished weapons and online screening tools that scan students’ communications for mentions of potential violence. The startups selling this tech have claimed that these systems can help school officials intervene before a crisis happens or respond more quickly when one is occurring. Pro-gun politicians have also advocated for this kind of technology, and argued that if schools implement enough monitoring, they can prevent mass shootings.

The problem is that there’s very little evidence that surveillance technology effectively stops these kinds of tragedies. Experts even warn that these systems can create a culture of surveillance at schools that harms students. At many schools, networks of cameras running AI-based software would join other forms of surveillance that schools already have, like metal detectors and on-campus police officers.

“In an attempt to stop, let’s say, a shooter like what happened at Uvalde, these schools have actually extended a cost to the students that attend them,” Odis Johnson Jr., the executive director of the Johns Hopkins Center for Safe and Healthy Schools, told Recode. “There are other things we have to consider when we seek to fortify our schools, which makes them feel like prisons and the students themselves feel like suspects.”

Still, schools and other venues often turn to surveillance technology in the wake of gun violence. The year following the 2018 mass shooting at Marjory Stoneman Douglas High School, the local Broward County School District installed analytic surveillance software from Avigilon, a company that offers AI-based recognition that tracks students’ appearances. After the mass shooting at Oxford High School in Michigan in 2021, the local school district announced it would trial a gun detection system sold by ZeroEyes, one of several startups that make software that scours security camera feeds for images of weapons. Similarly, New York City Mayor Eric Adams said he would look into weapons detection software from a company called Evolv in the aftermath of a mass shooting on the city’s subway system.

Various government agencies have helped schools purchase this kind of technology. Education officials have requested funding from the Department of Justice’s School Violence Prevention Program for a variety of products, including monitoring systems that look for “warning signs of … aggressive behaviors,” according to a 2019 document Recode received through a public records request. And generally speaking, surveillance tech has become even more prominent at schools during the pandemic, since some districts used Covid-19 relief programs to purchase software designed to make sure students were social distancing and wearing masks.

Even before the mass shooting in Uvalde, many schools in Texas had already installed some form of surveillance tech. In 2019, the state passed a law to “harden” schools, and within the US, Texas has the most contracts with digital surveillance companies, according to an analysis of government spending data conducted by the Dallas Morning News. The state’s investment in “security and monitoring” services has grown from $68 per student to $113 per student over the past decade, according to Chelsea Barabas, an MIT researcher studying the security systems deployed at Texas schools. Spending on social work services, however, grew from $25 per student to just $32 per student during the same time period. The gap between these two areas of spending is widest in the state’s most racially diverse school districts.

The Uvalde school district had already acquired various forms of security tech. One of those surveillance tools is a visitor management service sold by a company called Raptor Technologies. Another is a social media monitoring tool called Social Sentinel, which is meant to “identify any potential threats that might be made against students and or staff within the school district,” according to a document from the 2019-2020 school year.

It’s so far unclear exactly which surveillance tools may have been in use at Robb Elementary School during the mass shooting. JP Guilbault, the CEO of Social Sentinel’s parent company, Navigate360, told Recode that the tool plays “an important role as an early warning system beyond shootings.” He claimed that Social Sentinel can detect “suicidal, homicidal, bullying, and other harmful language that is public and connected to district-, school-, or staff-identified names as well as social media handles and hashtags associated with school-identified pages.”

“We are not currently aware of any specific links connecting the gunman to the Uvalde Consolidated Independent School District or Robb Elementary on any public social media sites,” Guilbault added. The Uvalde gunman did post ominous photos of two rifles on his Instagram account before the shooting, but there’s no evidence that he publicly threatened any of the schools in the district. He privately messaged a girl he did not know to say that he planned to shoot an elementary school.

Even more advanced forms of surveillance tech tend to miss warning signs. So-called weapon detection technology has accuracy issues and can flag all sorts of items that aren’t weapons, like walkie-talkies, laptops, umbrellas, and eyeglass cases. If it’s designed to work with security cameras, this tech also wouldn’t necessarily pick up any weapons that are hidden or covered. As critical studies by researchers like Joy Buolamwini, Timnit Gebru, and Deborah Raji have demonstrated, racism and sexism can be built inadvertently into facial recognition software. One firm, SN Technologies, offered a facial recognition algorithm to one New York school district that was 16 times more likely to misidentify Black women than white men, according to an analysis conducted by the National Institute of Standards and Technology. There’s evidence, too, that recognition technology may identify children’s faces less accurately than those of adults.

Even when this technology does work as advertised, it’s up to officials to be prepared to act on the information in time to stop any violence from occurring. While it’s still not clear what happened during the recent mass shooting in Uvalde, partly because local law enforcement has shared conflicting accounts about their response, it is clear that having enough time to respond was not the issue. Students called 911 multiple times, and law enforcement waited more than an hour before confronting and killing the gunman.

Meanwhile, in the absence of violence, surveillance makes schools worse for students. Research conducted by Johnson, the Johns Hopkins professor, and Jason Jabbari, a research professor at Washington University in St. Louis, found that a range of surveillance tools, including measures like security cameras and dress codes, hurt students’ academic performance at schools that used them. That’s partly because the deployment of surveillance measures (which, again, rarely stops mass shooters) tends to increase the likelihood that school officials or law enforcement at schools will punish or suspend students.

“Given the rarity of school shooting events, digital surveillance is more likely to be used to address minor disciplinary issues,” Barabas, the MIT researcher, explained. “Expanded use of school surveillance is likely to amplify these trends in ways that have a disproportionate impact on students of color, who are frequently disciplined for infractions that are both less serious and more discretionary than white students.”

This is all a reminder that schools often don’t use this technology in the way that it’s marketed. When one school deployed Avigilon’s software, school administrators used it to track when one girl went to the bathroom to eat lunch, supposedly because they wanted to stop bullying. An executive at one facial recognition company told Recode in 2019 that its technology was sometimes used to track the faces of parents who had been barred from contacting their children by a legal ruling or court order. Some schools have even used monitoring software to track and surveil protesters.

These are all consequences of the fact that schools feel they must go to extreme lengths to keep students safe in a country that is teeming with guns. Because these weapons remain a prominent part of everyday life in the US, schools try to adapt. That often means students must adapt to surveillance, including surveillance that shows limited evidence of working and may actually hurt them.
