
Now head of the nonprofit Distributed AI Research, Gebru hopes that going forward people focus on human welfare, not robot rights. Other AI ethicists have said that they’ll no longer discuss conscious or superintelligent AI at all.

“Quite a large gap exists between the current narrative of AI and what it can actually do,” says Giada Pistilli, an ethicist at Hugging Face, a startup focused on language models. “This narrative provokes fear, amazement, and excitement simultaneously, but it is mainly based on lies to sell products and take advantage of the hype.”

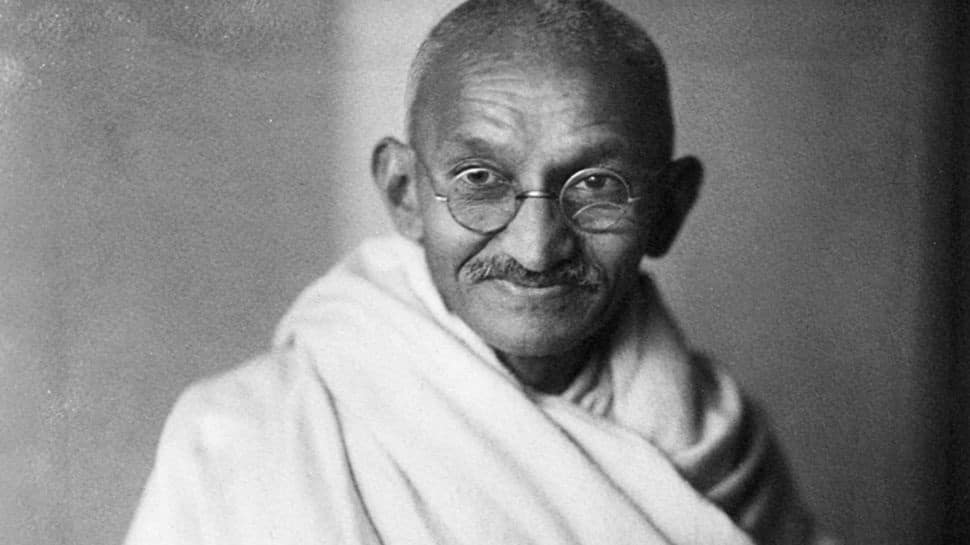

The consequence of speculation about sentient AI, she says, is an increased willingness to make claims based on subjective impression instead of scientific rigor and proof. It distracts from “countless ethical and social justice questions” that AI systems pose. While every researcher has the freedom to research what they want, she says, “I just fear that focusing on this subject makes us forget what is happening while looking at the moon.”

What Lemoine experienced is an example of what author and futurist David Brin has called the “robot empathy crisis.” At an AI conference in San Francisco in 2017, Brin predicted that in three to five years, people would claim AI systems were sentient and insist that they had rights. Back then, he thought those appeals would come from a virtual agent that took the appearance of a woman or child to maximize human empathic response, not “some guy at Google,” he says.

The LaMDA incident is part of a transition period, Brin says, where “we’ll be more and more confused over the boundary between reality and science fiction.”

Brin based his 2017 prediction on advances in language models. He expects that the trend will lead to scams. If people were suckers for a chatbot as simple as ELIZA decades ago, he says, how hard will it be to persuade millions that an emulated person deserves protection or money?

“There’s a lot of snake oil out there, and mixed in with all the hype are genuine advancements,” Brin says. “Parsing our way through that stew is one of the challenges that we face.”

And as empathetic as LaMDA seemed, people who are amazed by large language models should consider the case of the cheeseburger stabbing, says Yejin Choi, a computer scientist at the University of Washington. A local news broadcast in the United States involved a teenager in Toledo, Ohio, stabbing his mother in the arm in a dispute over a cheeseburger. But the headline “Cheeseburger Stabbing” is vague. Knowing what occurred requires some common sense. Attempts to get OpenAI’s GPT-3 model to generate text using “Breaking news: Cheeseburger stabbing” produce words about a man getting stabbed with a cheeseburger in an altercation over ketchup, and a man being arrested after stabbing a cheeseburger.

Language models sometimes make mistakes because deciphering human language can require multiple forms of commonsense understanding. To document what large language models are capable of doing and where they can fall short, last month more than 400 researchers from 130 institutions contributed to a collection of more than 200 tasks known as BIG-Bench, or Beyond the Imitation Game. BIG-Bench includes some traditional language-model tests like reading comprehension, but also logical reasoning and common sense.

Researchers at the Allen Institute for AI’s MOSAIC project, which documents the commonsense reasoning abilities of AI models, contributed a task called Social-IQa. They asked language models (not including LaMDA) to answer questions that require social intelligence, like “Jordan wanted to tell Tracy a secret, so Jordan leaned towards Tracy. Why did Jordan do this?” The team found large language models achieved performance 20 to 30 percent less accurate than people.
